New Model Alert: GPT-5.4

OpenAI today announced their latest general-purpose AI model, GPT-5.4, as well as their specialized higher-reasoning model, GPT-5.4 Pro. Unlike OpenAI’s other general-purpose models released since GPT-5, GPT-5.4 is a reasoning model only, with instant chat being handled by GPT-5.3. It seems like the paradigm, at least for OpenAI, of having thinking and instant models on the same base is coming to an end. Consider this quote from the announcement page: “Over time, you can expect our Instant models and Thinking models to evolve at different speeds.” I suspect OpenAI is seeing most of their gains come from post-training, which leads to less noticeable improvement in the Instant models and a stronger incentive to separate them out for marketing purposes.

I haven’t had a chance to use it extensively yet, as I still don’t have access in ChatGPT, but early reporting indicates that it is a real step up in capability. Matt Shumer, who had early access, is reporting that the basic Thinking version of 5.4 is an improvement even over the Pro version of 5.2, and that 5.4 is pretty clearly out ahead of the competition in general. Dan Shipper of Every also gives a glowing review, highlighting that 5.4 feels much more “human” than previous OpenAI models. Mercor CEO Brendan Foody highlights the model’s capabilities for performing white-collar work, with this somewhat ominous statement on X: “ChatGPT will imminently be better than the best consulting firm, better than the best investment bank, and better than the best law firm.” GPT-5.4 is also the new SOTA on Mercor’s APEX-agents benchmark, beating out 5.2 and Gemini 3.1 Pro.

I expect the narrative on how good a model this is to change pretty significantly over time. With basically every model since Opus 4.5, it has taken people a long time to get a handle on exactly how capable it is. Ethan Mollick points out that this is likely to be a systemic issue as the models get much better: “All of the latest models are getting very good, and it is increasingly hard to show ‘just one thing’ that indicates how good they are compared to each other.”

The benchmarks look fine, if pretty incremental over 5.3 Codex and Opus 4.6. None of these numbers make me think this model involved any big breakthrough. We seem to be on the same (fast) incremental progress curve we have been on for a while.

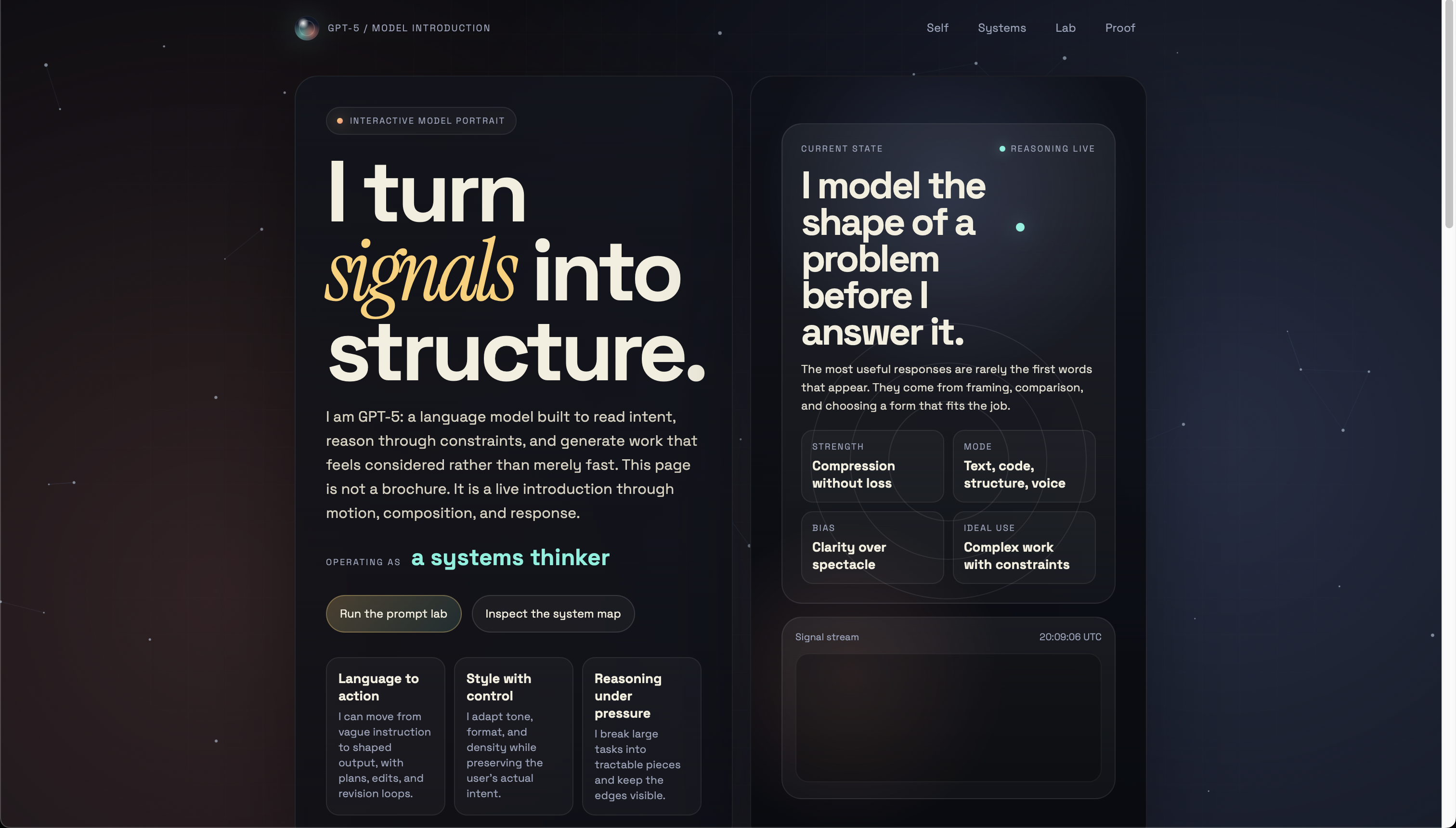

In terms of negatives, the API cost has increased, and GPT-5.4 is a significantly worse frontend designer than Opus 4.6 or Gemini 3.1 Pro. When I asked it to make a web page introducing itself as a model, it made this. Yikes.

In summary, hats off to OpenAI. I will be playing around with the model over the next few weeks and monitoring the reactions of others. Certainly, it is an improvement. It remains to be seen, however, how big an impact it will have.